Agentic AI Compliance for Financial Services: Scale Fast, Audit with Confidence

AI agent compliance is the ability to demonstrate, in real time and retrospectively, that every AI agent operated within its authorized scope, accessed only data it was entitled to access, and produced a complete, auditable record of each interaction. Most financial services firms deploying agentic AI today cannot do this. That gap is about to become expensive.

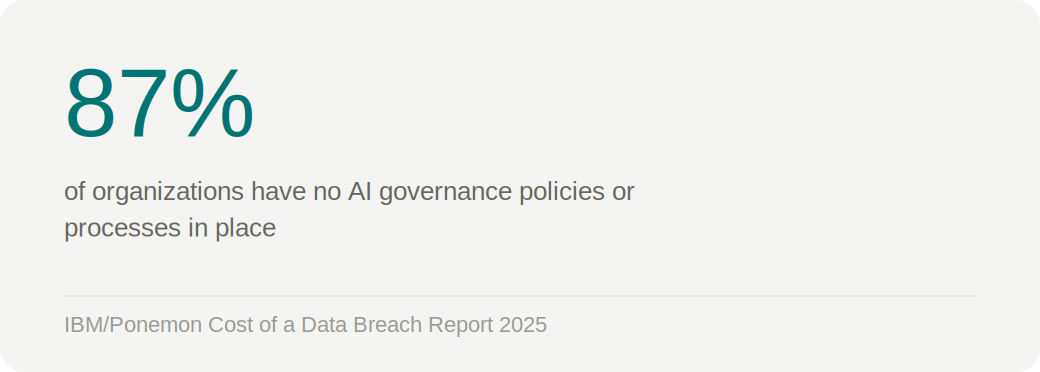

The IBM/Ponemon 2025 Cost of a Data Breach Report found that 87% of organizations have no AI governance policies or processes in place. In the United States, average breach costs hit an all-time high of $10.22 million in 2025, driven in part by steeper regulatory penalties. Shadow AI incidents added an average of $670,000 per breach. These aren't abstract risks. They're the direct financial consequence of deploying AI without the infrastructure to govern it.

For financial services firms, the stakes are compounded by a regulatory environment that is moving fast and asking specific questions about agentic AI for the first time.

What Financial Services Regulators Are Asking About Agentic AI

FINRA's 2026 Annual Regulatory Oversight Report is the clearest signal yet that regulators are no longer treating agentic AI as a future concern. The report formally classifies AI agents as a distinct supervisory risk category and identifies four primary risk vectors: agents acting without human validation; scope and authority exceeding what users intended; auditability challenges in multi-step reasoning chains; and potential misuse of sensitive client data.

For compliance teams, the practical implication is direct. FINRA recommends that firms deploying agents capable of acting or transacting implement narrow scope, explicit permissions, complete audit trails of all agent actions, and human checkpoints before execution. That's not a general policy recommendation. It's a technical architecture requirement, and it has to be built into how agents access data, not bolted on afterward.

SOX presents a parallel challenge. When AI agents query financial databases, generate reports, or feed data into decision-support workflows, those interactions touch the same data assets that SOX was designed to protect. The question auditors will increasingly ask is not just whether the data was accurate, but whether the access was authorized, purposeful, and documented. An agent that inherits broad service account credentials and queries freely across financial datasets cannot answer that question.

The same logic applies to data residency requirements. An agent operating across multi-cloud environments, pulling from Snowflake in one region and SQL Server in another, can inadvertently move regulated data across jurisdictional boundaries. Without runtime policy evaluation that enforces residency constraints at the data layer, compliance exposure accumulates silently.
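To make the residency point concrete, here is a minimal sketch of a runtime check that denies a request when the agent runs outside the regions permitted for a data source. The source names, region names, and the `residency_allows` function are all hypothetical and chosen for illustration; a real deployment would load these rules from a policy store.

```python
from dataclasses import dataclass

# Hypothetical mapping of data sources to the region that hosts them.
SOURCE_REGION = {
    "snowflake_eu": "eu-west-1",
    "sqlserver_us": "us-east-1",
}

# Hypothetical residency rule: data hosted in a region may only be
# delivered to agents running in one of the listed regions.
ALLOWED_DESTINATIONS = {
    "eu-west-1": {"eu-west-1", "eu-central-1"},
    "us-east-1": {"us-east-1", "us-west-2"},
}


@dataclass(frozen=True)
class DataRequest:
    agent_id: str
    agent_region: str
    source: str


def residency_allows(request: DataRequest) -> bool:
    """Return True only if the agent's region is permitted for the source."""
    source_region = SOURCE_REGION.get(request.source)
    if source_region is None:
        return False  # unknown source: deny by default
    allowed = ALLOWED_DESTINATIONS.get(source_region, set())
    return request.agent_region in allowed
```

The deny-by-default branch matters here: a source that has not been classified yet is treated as off-limits rather than silently allowed.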

The Agentic AI Compliance Gap in Financial Services

The core challenge is architectural. Traditional compliance frameworks were built around human intent and human accountability. A human analyst queries a database, their credentials are logged, and their action is attributable. When an AI agent queries that same database, on behalf of a human who may not have explicitly authorized that specific data access, the accountability chain breaks.

Compliance officers in financial services are now being asked to answer questions that their current tooling wasn't designed to support. Which agent queries violated our data confidentiality policies? What data did this agent access on behalf of this user last Thursday? Can we demonstrate to auditors that no agent exceeded its authorized scope during this reporting period?

Without a system designed to track agent behavior at the query level, correlated to user identity and data classification, those questions don't have answers. And in a post-FINRA-2026 environment, not having answers is itself a compliance risk.

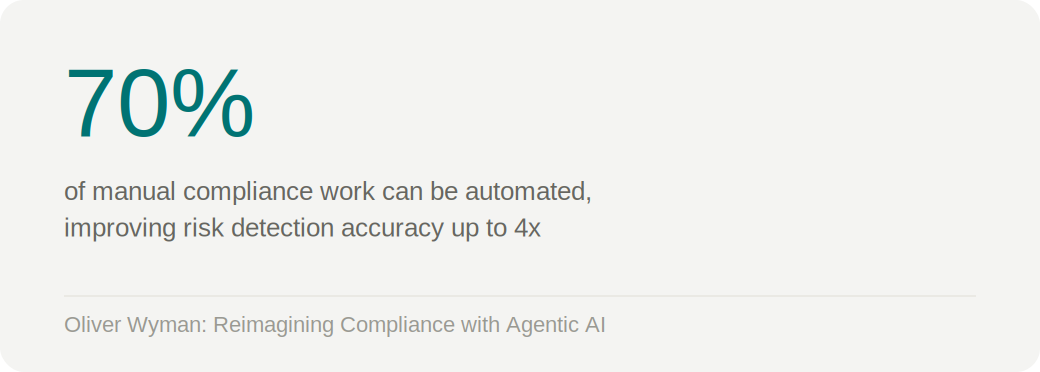

Oliver Wyman research found that automating up to 70% of manual compliance work can improve risk detection accuracy by as much as four times while enabling continuous, real-time regulatory monitoring. The firms building that capability now, with agentic AI as a governed, auditable layer rather than a shadow one, will lead on both adoption and audit readiness.

What Audit-Ready Agentic AI Actually Requires

Audit-ready agentic AI in financial services requires four capabilities working together. Each addresses a specific point in the compliance accountability chain.

- The first is a complete, immutable audit trail. Every data request made by an AI agent must be logged with the full context: which user initiated the request, which agent executed it, which data source was queried, which specific tables and fields were accessed, and under what policy authority. That record needs to be queryable, not just stored. A compliance officer who needs to investigate an agent interaction from six weeks ago should be able to reconstruct it in minutes, not days.

- The second is behavioral baselining. An audit trail tells you what happened. Behavioral baselining tells you whether what happened was normal. By establishing learned patterns for each agent, including typical query types, data volumes, and access patterns, the system can flag deviations automatically: an agent querying tables outside its normal scope, joining datasets it has never accessed before, or issuing queries at volumes inconsistent with its intended function. These deviations are the early signal of misconfiguration, credential misuse, or an emerging security event.

- The third is natural language investigation. The volume of agent activity in a scaled agentic deployment exceeds what any compliance team can review manually. Analysts need the ability to ask questions in plain language and get precise answers from the underlying telemetry. "Which agent queries touched PII data outside the authorized scope?" should return an actionable result, not require a SQL query against a raw log table.

- The fourth is automated policy enforcement with real-time alerting. Compliance at scale can't depend on human review after the fact. Policy violations need to be detected, flagged, and, where possible, prevented in real time. That means streaming alerts to SIEM platforms and communication tools like Microsoft Teams, so investigation can begin in seconds rather than hours.
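The first two capabilities can be sketched in a few lines. The example below is an illustration under stated assumptions, not a description of any vendor's implementation: the `AuditRecord` fields mirror the context listed above, the log is made tamper-evident with a simple SHA-256 hash chain, and the baseline check flags previously unseen tables and unusually large result volumes using a z-score. All class names, function names, and thresholds are hypothetical.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from statistics import mean, pstdev


@dataclass(frozen=True)
class AuditRecord:
    timestamp: str      # ISO 8601, UTC
    user: str           # who initiated the request
    agent: str          # which agent executed it
    data_source: str
    tables: tuple
    fields: tuple
    policy_id: str      # policy authority for the access
    rows_returned: int


class AuditLog:
    """Append-only log; each entry's hash also covers the previous hash."""

    def __init__(self):
        self.entries = []  # list of (record, digest) pairs

    @staticmethod
    def _digest(prev_hash, record):
        payload = json.dumps(asdict(record), sort_keys=True)
        return hashlib.sha256((prev_hash + payload).encode()).hexdigest()

    def append(self, record):
        prev_hash = self.entries[-1][1] if self.entries else ""
        digest = self._digest(prev_hash, record)
        self.entries.append((record, digest))
        return digest

    def verify(self):
        """Recompute the chain; any edited record breaks it."""
        prev_hash = ""
        for record, digest in self.entries:
            if self._digest(prev_hash, record) != digest:
                return False
            prev_hash = digest
        return True

    def by_agent(self, agent):
        return [r for r, _ in self.entries if r.agent == agent]


def deviations(history, candidate, z_threshold=3.0):
    """Compare one request against an agent's past behavior."""
    flags = []
    known_tables = {t for r in history for t in r.tables}
    new_tables = set(candidate.tables) - known_tables
    if new_tables:
        flags.append(f"tables outside normal scope: {sorted(new_tables)}")
    volumes = [r.rows_returned for r in history]
    if len(volumes) >= 2:
        mu, sigma = mean(volumes), pstdev(volumes)
        # With zero variance in the history, the volume check is skipped.
        if sigma > 0 and (candidate.rows_returned - mu) / sigma > z_threshold:
            flags.append("result volume far above baseline")
    return flags
```

In this sketch, `by_agent` stands in for the "queryable, not just stored" requirement, and the list returned by `deviations` is what would be forwarded to an alerting channel. A production baseline would likely use richer features than table names and row counts.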

How TrustLogix Delivers Compliance-Ready Agentic AI

TrustAI by TrustLogix operationalizes all four of these requirements as the policy control plane between enterprise data platforms and AI frameworks, governing every agent interaction before data is returned. At its core is the TrustAI MCP Gateway, the active control point for every tool call, which brokers MCP and agent-to-agent traffic, discovers agents and tools across the enterprise, and enforces identity propagation in-flight. Every request is evaluated by the PBAC Policy Decision Point against five dimensions: user, agent, task, data, and context. Nothing moves without a policy decision.
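As a rough illustration of what evaluating a request against those five dimensions can look like, the sketch below models a policy decision point in plain Python. It is not TrustAI's API: the `Request`, `Policy`, and `decide` names, the attribute choices, and the business-hours condition are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class Request:
    user_role: str             # user dimension
    agent_id: str              # agent dimension
    task: str                  # task dimension
    data_classification: str   # data dimension
    context: dict              # context dimension, e.g. {"business_hours": True}


@dataclass(frozen=True)
class Policy:
    policy_id: str
    user_roles: frozenset
    agents: frozenset
    tasks: frozenset
    data_classifications: frozenset
    require_business_hours: bool = False

    def matches(self, req: Request) -> bool:
        # Context condition (hypothetical): some policies only apply in hours.
        if self.require_business_hours and not req.context.get("business_hours", False):
            return False
        return (
            req.user_role in self.user_roles
            and req.agent_id in self.agents
            and req.task in self.tasks
            and req.data_classification in self.data_classifications
        )


def decide(req: Request, policies) -> Tuple[bool, Optional[str]]:
    """Allow only if some policy matches on all five dimensions.

    Returns (allowed, policy_id) so the decision can be written to an
    audit record along with the policy that authorized it.
    """
    for policy in policies:
        if policy.matches(req):
            return True, policy.policy_id
    return False, None  # no matching policy: deny by default
```

Returning the matching policy identifier alongside the decision is a deliberate choice in this sketch, since it is what lets an audit record state under what policy authority data was released.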

That policy decision engine drives the full compliance architecture. Every agent interaction is logged to the complete User → Agent → Query → Data accountability chain. Behavioral baselining runs continuously, with deviations streamed as alerts to Splunk, Microsoft Teams, or any SIEM platform the firm already uses. The TrustAI Copilot gives compliance analysts a natural language interface to the underlying telemetry: ask "Which agent queries violated our data confidentiality policies?" and get a specific, actionable answer, not a raw log dump. Policy enforcement happens natively at the data source, with row-level filtering, field masking, and output redaction applied before data enters the agent's context, so violations are prevented rather than just detected. And when an incident requires immediate action, TrustAI's Kill Switch provides a real-time mechanism to revoke all agent access at the data layer instantly, without waiting for a manual IAM update to propagate.
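The idea of filtering and masking before data reaches the agent, with a revocation check in front of it, can be sketched as follows. This is a simplified in-memory illustration, not how TrustAI enforces policy at the data source: the `release_to_agent` function, the `region` column, and the mask token are hypothetical.

```python
MASK = "***"  # hypothetical placeholder for masked values


def release_to_agent(agent_id, rows, allowed_regions, masked_fields, revoked_agents):
    """Apply controls before any row is handed to the agent.

    - Revocation check: a revoked agent gets nothing, regardless of policy.
    - Row-level filtering: rows outside the allowed regions are dropped.
    - Field masking: sensitive fields are replaced with a mask token.
    """
    if agent_id in revoked_agents:
        raise PermissionError(f"agent {agent_id} has been revoked")

    released = []
    for row in rows:
        if row.get("region") not in allowed_regions:
            continue  # row-level filter
        released.append(
            {key: (MASK if key in masked_fields else value) for key, value in row.items()}
        )
    return released
```

Because the controls run before the rows are returned, the agent's context never contains the unmasked values, which is the difference between preventing a violation and detecting one afterward.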

For a leading financial services institution that partnered with TrustLogix, the compliance outcomes were concrete. The firm can now demonstrate to auditors exactly how sensitive financial and personal data was accessed or processed by autonomous agents: which agent, on behalf of which user, against which dataset, under what policy authority. The audit gap that existed before TrustLogix, the inability to answer the accountability questions regulators will ask, is closed.

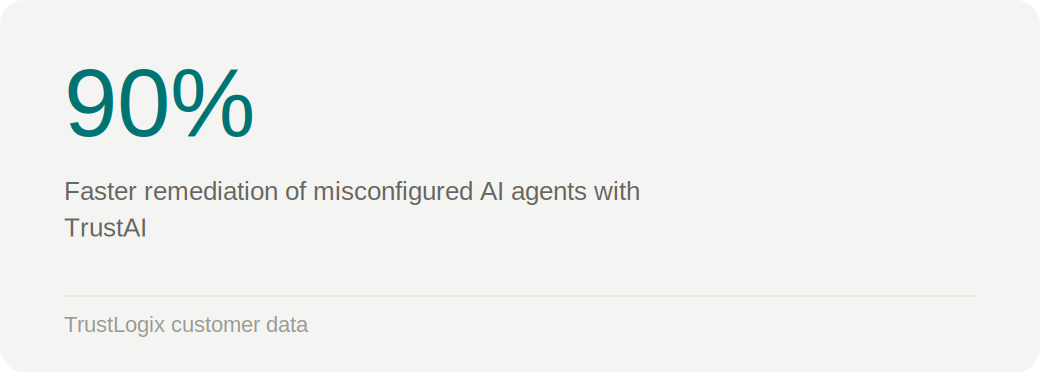

Remediation of misconfigured agents accelerated by 90%. Provisioning time dropped from weeks to minutes. And the firm's agentic AI program scaled rather than stalled, because the security team had the visibility and control it needed to approve deployments confidently rather than block them by default.

The Gartner Market Guide for DSPM explicitly calls for extending data security posture management to include agentic AI, mapping data flows through AI pipelines, and analyzing data access in agentic contexts. TrustAI is built to deliver exactly that, as a native extension of the TrustLogix data security platform rather than a separate point solution.

The Firms That Govern AI First Will Be the Ones That Scale the Fastest

There is a version of agentic AI adoption in financial services that ends with a breach, a failed audit, or a regulatory action. That version is characterized by fast deployment, minimal governance, and the assumption that security can be addressed later.

There is another version where security and AI adoption move together, where every agent deployment comes with the access controls, audit trails, and behavioral monitoring to make it defensible. That version scales faster, not slower, because security teams can approve rather than block, and compliance teams can answer rather than guess.

The firms building that foundation now have a compounding advantage. Every governed deployment generates the behavioral baseline data that makes the next one safer and faster to approve. The infrastructure investment pays for itself, in avoided breach costs, in accelerated audit cycles, and in the organizational confidence to keep scaling.

The question isn't whether your agentic AI program will face regulatory scrutiny. It will. The question is whether you'll be ready to answer when it does.

See how TrustLogix helps financial services firms build audit-ready agentic AI infrastructure. Request a demo.
